“Not So Fast: AI Coding Tools Can Actually Reduce Productivity” by Steve Newman is a detailed response to METR’s study, “Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity.” The implied conclusion is that AI tools decrease productivity by roughly 20%, but that isn’t the only possible reading, and more study is absolutely required.

[This study] applies to a difficult scenario for AI tools (experienced developers working in complex codebases with high quality standards), and may be partially explained by developers choosing a more relaxed pace to conserve energy, or leveraging AI to do a more thorough job.

– Steve Newman

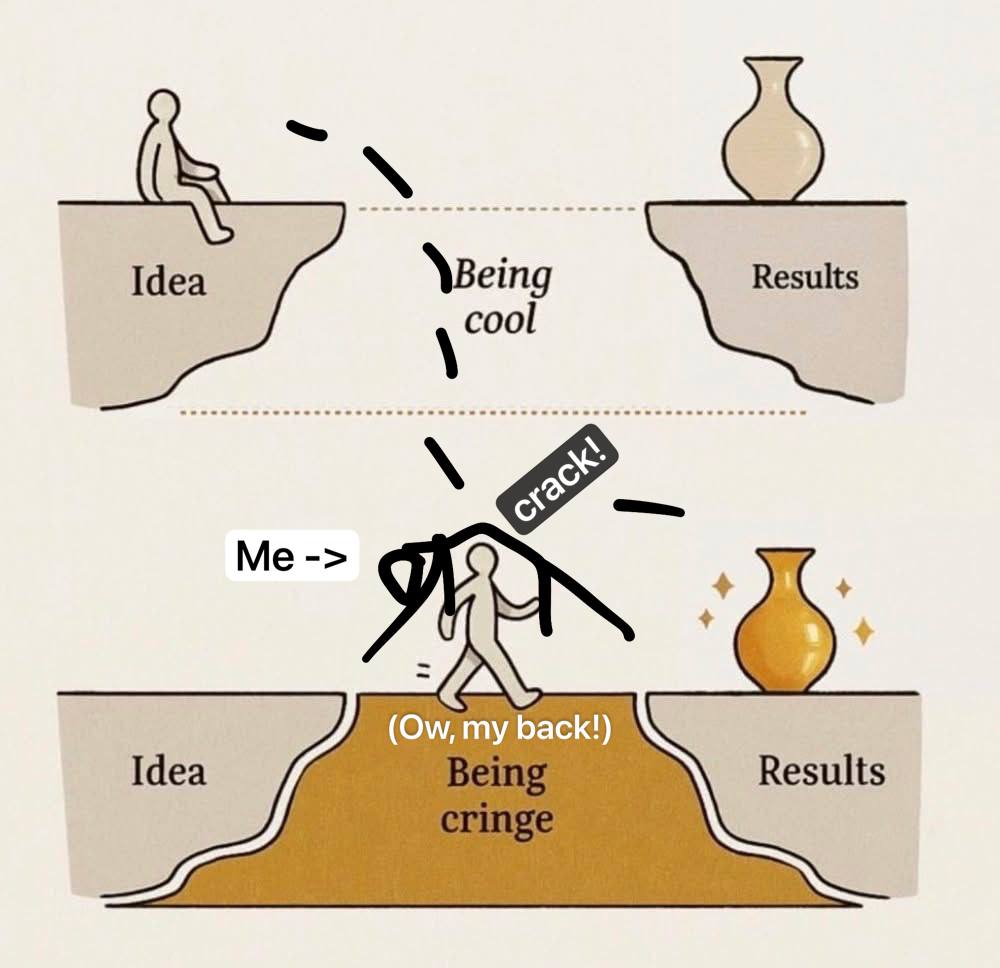

The section “Some Kind of Help is the Kind of Help We All Can Do Without” says exactly what I’d expect: the slowdown is attributable to time spent dealing with substandard AI output. I believe this effect can be reduced by giving up on AI assistance sooner. In my experience, AI tooling works best for simple tasks where you verify the suggested code or tool usage against manuals and guides, or where you treat the output only as a first pass that shows which tools and libraries to look up to better understand your options.

To me, it seems that many programmers are too focused on retrying AI tools over and over, even though that usually isn’t very effective. If AI can’t be coerced into correct output within a few tries, it will usually take more effort to keep trying than to write the code yourself.

I wrote the following from the perspective of wanting this study to be false:

There are several potential reasons the study’s results could be wrong. The study accounted for these pitfalls, but I feel some of its arguments were not well supported.

- Overuse of AI: I think the reasoning for why this effect wasn’t present is shaky, because the analysis it relies on reduced the sample size significantly.

- Lack of experience with AI tools: This was treated as a non-issue, but that determination relies on self-reporting, which is generally unreliable (as was pointed out elsewhere). That said, there was no observable change over the course of the study, which suggests that growing experience is unlikely to affect the result.

- Difference in thoroughness: This effect may have influenced the result, but there was no significant effect shown either way. This means more study is required.

- More time might not mean more effort: This was presented with nothing to argue for or against it, because it needs further study.

(The most important thing to acknowledge is that it’s complex, and we don’t have all the answers.)

Conclusions belong at the top of articles.

Studies are traditionally formatted in a way that leaves their conclusions to the end. We’ve all been taught to do the same for essay writing in school. This should not be carried over to blog posts and articles published online. I think it is bad practice in general, but online especially, where attention spans are at their shortest, put your key takeaways at the top, or at least provide a link to them from the top.

Writers have a strong impulse to save their best for last. We care about what we write and want it to be fully appreciated, but that’s just not going to happen. When you bury the lede, you are spreading misinformation, even if you’ve said nothing wrong.

Putting conclusions at the end is based on the assumption that everyone reads the whole thing. Almost no one does that. The majority look at the headline only. Of those who go further, 99% read only the beginning, and most of the rest don’t finish either. A minority finishes reading everything they start, and that’s actually a bad thing to do. Many things aren’t worth reading ALL of. Like this: why are you still reading? I’ve made the point already. This text is fluff at the end, existing to emphasize a point you should already have understood from the rest.